In a previous post I took a first look at the Function Monkey library to define Azure Functions using commands and handlers.

In this post I’m going to try to take an existing function app and convert it to the Function Monkey approach. I should note up front that this post is not a criticism of the library itself. Like everything, it’s a work in progress, and I may be misunderstanding some of the features :)

The Starting App

The non-Function Monkey app creates the workflow client -> HTTP function -> queue function -> blob function, and looks like the following:

    using System.Collections.Generic;
    using System.IO;
    using System.Threading.Tasks;
    using Microsoft.AspNetCore.Http;
    using Microsoft.Azure.WebJobs;
    using Microsoft.Azure.WebJobs.Extensions.Http;
    using Microsoft.Extensions.Logging;
    using Newtonsoft.Json;

    namespace FunctionApp2
    {
        public class WordsAdditionRequest
        {
            public IEnumerable<string> Words { get; set; }
        }

        public static class Function1
        {
            [FunctionName("AddWords")]
            public static async Task AddWords(
                [HttpTrigger(AuthorizationLevel.Function, "post", Route = null)] HttpRequest request,
                [Queue("WordsToProcess")] IAsyncCollector<string> wordQueue,
                ILogger log)
            {
                // validation/error checking logic omitted for brevity
                log.LogInformation("C# HTTP trigger function processed a request.");

                string requestBody = await new StreamReader(request.Body).ReadToEndAsync();
                WordsAdditionRequest wordsAdditionRequest = JsonConvert.DeserializeObject<WordsAdditionRequest>(requestBody);

                foreach (var word in wordsAdditionRequest.Words)
                {
                    log.LogInformation($"Adding word '{word}'");
                    await wordQueue.AddAsync(word);
                }
            }

            [FunctionName("ProcessWord")]
            public static void ProcessWord(
                [QueueTrigger("WordsToProcess")] string wordToProcess,
                [Blob("uppercase-words/{rand-guid}")] out string uppercaseWord,
                ILogger log)
            {
                log.LogInformation($"C# Queue trigger for word '{wordToProcess}'");
                uppercaseWord = wordToProcess.ToUpperInvariant();
            }

            [FunctionName("AuditWordWritten")]
            public static void AuditWordWritten(
                [BlobTrigger("uppercase-words/{name}")] string uppercaseWord,
                string name,
                ILogger log)
            {
                log.LogInformation($"C# blob trigger - audit for word '{uppercaseWord}'");
            }
        }
    }

Refactoring to Function Monkey

First install the NuGet packages FunctionMonkey and FunctionMonkey.Compiler.

The next step is to create a command, so we’ll change WordsAdditionRequest to:

    // The result type must match the handler's result type (string[]).
    public class AddWordsCommand : ICommand<string[]>
    {
        public IEnumerable<string> Words { get; set; }
    }

So far so good. Next we need to create a handler for this command. The handler needs to process the command and return each of the strings so they can be added as separate messages to the queue, which lets us fan out the work:

    internal class AddWordsHandler : ICommandHandler<AddWordsCommand, string[]>
    {
        public Task<string[]> ExecuteAsync(AddWordsCommand command, string[] previousResult)
        {
            return Task.FromResult(command.Words.ToArray());
        }
    }

The next thing is to create the configuration class and wire up the handler as an HTTP-triggered function. The initial attempt looks like this:

    public class FunctionAppConfiguration : IFunctionAppConfiguration
    {
        public void Build(IFunctionHostBuilder builder)
        {
            builder
                .Setup((serviceCollection, commandRegistry) =>
                {
                    commandRegistry.Register<AddWordsHandler>();
                })
                .Functions(functions => functions
                    .HttpRoute("v1/AddWords", route => route
                        .HttpFunction<AddWordsCommand>(HttpMethod.Post)
                        .OutputTo.StorageQueue("WordsToProcess")
                    )
                );
        }
    }

Now we have an HTTP function outputting to a storage queue.

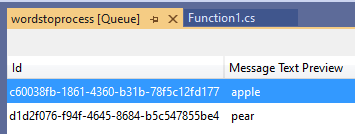

If we run this and submit an HTTP request containing the JSON { "words": ["apple", "pear"] }, we get 2 messages added to the WordsToProcess queue.

What’s nice here is that because the command and handler return string[], Function Monkey has automatically “fanned-out” the data into multiple messages. When I was writing the code I wasted some time looking for a specific way to implement this, but really this is a nice, intuitive way of handling IEnumerable return values.
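To make the fan-out behaviour concrete, here is a minimal sketch of the idea. It is not Function Monkey's implementation, and the names `FanOutSketch` and `ToMessages` are hypothetical: it only illustrates the observable rule that an enumerable result becomes one message per element, while a single value (including a string) becomes one message.

```csharp
using System;
using System.Collections;
using System.Collections.Generic;

public static class FanOutSketch
{
    // Hypothetical helper (not a Function Monkey API): converts a handler
    // result into the payloads that would each become one queue message.
    public static IReadOnlyList<object> ToMessages(object result)
    {
        var messages = new List<object>();

        // No result, no messages.
        if (result is null)
        {
            return messages;
        }

        // A string is enumerable (of chars) but should stay a single message.
        if (result is string text)
        {
            messages.Add(text);
            return messages;
        }

        // Any other enumerable is fanned out: one message per element.
        if (result is IEnumerable items)
        {
            foreach (var item in items)
            {
                messages.Add(item);
            }
            return messages;
        }

        // A single non-enumerable value becomes a single message.
        messages.Add(result);
        return messages;
    }
}

public static class Program
{
    public static void Main()
    {
        // Two words in, two messages out.
        Console.WriteLine(FanOutSketch.ToMessages(new[] { "apple", "pear" }).Count);

        // A single string is one message, not one per character.
        Console.WriteLine(FanOutSketch.ToMessages("apple").Count);
    }
}
```

Under this rule, returning string[] from the handler is all that is needed to get one queue message per word, which matches what we saw in the WordsToProcess queue.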

So far so good. The next step is to read the messages from the queue, convert them to upper case, and then write out the blobs. This is unfortunately where I ran into some roadblocks.

First I defined the command and handler:

    public class ConvertToUpperCaseCommand : ICommand<string>
    {
        public string Word { get; set; }
    }

    internal class ConvertToUpperCaseHandler : ICommandHandler<ConvertToUpperCaseCommand, string>
    {
        public Task<string> ExecuteAsync(ConvertToUpperCaseCommand command, string previousResult)
        {
            return Task.FromResult(command.Word.ToUpperInvariant());
        }
    }

Then I tried to modify the config:

    public class FunctionAppConfiguration : IFunctionAppConfiguration
    {
        public void Build(IFunctionHostBuilder builder)
        {
            builder
                .Setup((serviceCollection, commandRegistry) =>
                {
                    commandRegistry.Register<AddWordsHandler>();
                    commandRegistry.Register<ConvertToUpperCaseHandler>();
                })
                .Functions(functions => functions
                    .HttpRoute("v1/AddWords", route => route
                        .HttpFunction<AddWordsCommand>(HttpMethod.Post)
                        .OutputTo.StorageQueue("WordsToProcess")
                    )
                    .Storage(storageFunctions => storageFunctions
                        .QueueFunction<ConvertToUpperCaseCommand>("WordsToProcess")
                        .OutputTo.StorageBlob // StorageBlob does not exist
                    )
                );
        }
    }

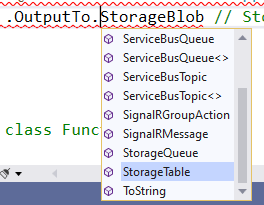

This is where I ran into a problem: I couldn't find an option to output to blob storage.

There are output bindings for storage queues and tables but not for blobs. I could of course be misunderstanding how to configure this.

To get around this I’m going to try to mix Function Monkey with traditional attributes by adding the following:

    [FunctionName("ProcessWord")]
    public static void ProcessWord(
        [QueueTrigger("WordsToProcess")] string wordToProcess,
        [Blob("uppercase-words/{rand-guid}")] out string uppercaseWord,
        ILogger log)
    {
        log.LogInformation($"C# Queue trigger for word '{wordToProcess}'");
        uppercaseWord = wordToProcess.ToUpperInvariant();
    }

Now running the app and making an HTTP request results in the messages being added to the queue via Function Monkey and then the traditionally-specified ProcessWord function executes and writes to blob storage.

It’s nice that you can mix Function Monkey with the attribute-based function definition, though I don’t know whether this is recommended or whether there could be any unintended side effects.

The final part is the blob-triggered audit function.

Once again I’ll define a command and handler:

    public class AuditCommand : ICommand, IStreamCommand
    {
        public Stream Stream { get; set; }

        public string Name { get; set; }
    }

    internal class AuditHandler : ICommandHandler<AuditCommand>
    {
        public Task ExecuteAsync(AuditCommand command)
        {
            using (StreamReader reader = new StreamReader(command.Stream))
            {
                string name = reader.ReadToEnd();
                // How to log name?
            }

            return Task.CompletedTask;
        }
    }

This version of a blob-triggered command gives us a Stream representing the blob data (simpler blob commands without streams are also available).
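The handler above reads the stream synchronously with ReadToEnd. As a minimal sketch of an asynchronous alternative, the hypothetical helper below (`BlobTextReader.ReadAllTextAsync` is my own name, not part of Function Monkey) reads a Stream as text using only the .NET base class library. It assumes the blob is UTF-8 text and leaves the stream open, since this post does not establish who is responsible for disposing it.

```csharp
using System;
using System.IO;
using System.Text;
using System.Threading.Tasks;

public static class BlobTextReader
{
    // Hypothetical helper: reads the whole stream as text without blocking.
    // Assumes UTF-8 content unless a byte order mark says otherwise.
    public static async Task<string> ReadAllTextAsync(Stream stream)
    {
        // leaveOpen: true so the caller remains in charge of the stream's lifetime.
        using (var reader = new StreamReader(
            stream,
            Encoding.UTF8,
            detectEncodingFromByteOrderMarks: true,
            bufferSize: 1024,
            leaveOpen: true))
        {
            return await reader.ReadToEndAsync();
        }
    }
}

public static class Program
{
    public static async Task Main()
    {
        // An in-memory stream stands in for the blob contents.
        using (var stream = new MemoryStream(Encoding.UTF8.GetBytes("APPLE")))
        {
            string text = await BlobTextReader.ReadAllTextAsync(stream);
            Console.WriteLine(text);
        }
    }
}
```

In a handler this would mean making ExecuteAsync an async method and awaiting the helper with command.Stream, instead of returning Task.CompletedTask.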

We can now wire this up:

    public class FunctionAppConfiguration : IFunctionAppConfiguration
    {
        public void Build(IFunctionHostBuilder builder)
        {
            builder
                .Setup((serviceCollection, commandRegistry) =>
                {
                    commandRegistry.Register<AddWordsHandler>();
                    commandRegistry.Register<AuditHandler>();
                })
                .Functions(functions => functions
                    .HttpRoute("v1/AddWords", route => route
                        .HttpFunction<AddWordsCommand>(HttpMethod.Post)
                        .OutputTo.StorageQueue("WordsToProcess"))
                    .Storage(storageFunctions => storageFunctions
                        .BlobFunction<AuditCommand>("uppercase-words/{name}"))
                );
        }
    }

Running this now results in the blob being written and the audit command handler executing. The original function just wrote the blob contents to the log.

I’m not sure how to get access to the log in a handler, so I’m going to just add a constructor that takes an ILogger and see what happens:

    internal class AuditHandler : ICommandHandler<AuditCommand>
    {
        private readonly ILogger log;

        public AuditHandler(ILogger log)
        {
            this.log = log;
        }

        public Task ExecuteAsync(AuditCommand command)
        {
            using (StreamReader reader = new StreamReader(command.Stream))
            {
                string name = reader.ReadToEnd();
                log.LogInformation($"C# blob trigger - audit for word '{name}'");
            }

            return Task.CompletedTask;
        }
    }

Running this, it just works: the ILogger is injected into the handler and the log message is output: C# blob trigger - audit for word 'APPLE'

By the looks of it there’s a lot more to Function Monkey, such as DI, validation, etc.

The final code:

    using System.Collections.Generic;
    using System.IO;
    using System.Linq;
    using System.Net.Http;
    using System.Threading.Tasks;
    using AzureFromTheTrenches.Commanding.Abstractions;
    using FunctionMonkey.Abstractions;
    using FunctionMonkey.Abstractions.Builders;
    using FunctionMonkey.Commanding.Abstractions;
    using Microsoft.Azure.WebJobs;
    using Microsoft.Extensions.Logging;

    namespace FunctionApp2
    {
        public class AddWordsCommand : ICommand<string[]>
        {
            public IEnumerable<string> Words { get; set; }
        }

        internal class AddWordsHandler : ICommandHandler<AddWordsCommand, string[]>
        {
            public Task<string[]> ExecuteAsync(AddWordsCommand command, string[] previousResult)
            {
                return Task.FromResult(command.Words.ToArray());
            }
        }

        public class AuditCommand : ICommand, IStreamCommand
        {
            public Stream Stream { get; set; }

            public string Name { get; set; }
        }

        internal class AuditHandler : ICommandHandler<AuditCommand>
        {
            private readonly ILogger log;

            public AuditHandler(ILogger log)
            {
                this.log = log;
            }

            public Task ExecuteAsync(AuditCommand command)
            {
                using (StreamReader reader = new StreamReader(command.Stream))
                {
                    string name = reader.ReadToEnd();
                    log.LogInformation($"C# blob trigger - audit for word '{name}'");
                }

                return Task.CompletedTask;
            }
        }

        public class FunctionAppConfiguration : IFunctionAppConfiguration
        {
            public void Build(IFunctionHostBuilder builder)
            {
                builder
                    .Setup((serviceCollection, commandRegistry) =>
                    {
                        commandRegistry.Register<AddWordsHandler>();
                        commandRegistry.Register<AuditHandler>();
                    })
                    .Functions(functions => functions
                        .HttpRoute("v1/AddWords", route => route
                            .HttpFunction<AddWordsCommand>(HttpMethod.Post)
                            .OutputTo.StorageQueue("WordsToProcess"))
                        .Storage(storageFunctions => storageFunctions
                            .BlobFunction<AuditCommand>("uppercase-words/{name}"))
                    );
            }
        }

        public static class Function1
        {
            [FunctionName("ProcessWord")]
            public static void ProcessWord(
                [QueueTrigger("WordsToProcess")] string wordToProcess,
                [Blob("uppercase-words/{rand-guid}")] out string uppercaseWord,
                ILogger log)
            {
                log.LogInformation($"C# Queue trigger for word '{wordToProcess}'");
                uppercaseWord = wordToProcess.ToUpperInvariant();
            }
        }
    }
