If you have some data in a web page but there is no API to get the same data, you can use the (often brittle and error-prone) technique of screen scraping to read the values out of the HTML.

By leveraging Azure Functions, it’s trivial (depending on how horrendous the HTML is) to create an HTTP-triggered Azure Function that loads a web page, parses the HTML, and returns the data as JSON.

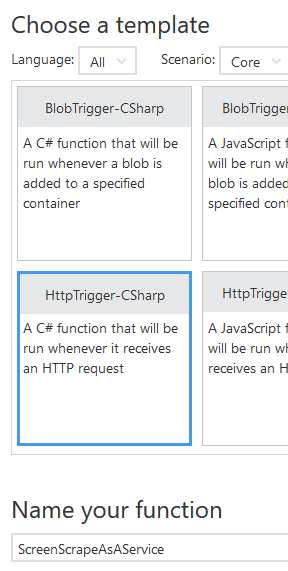

The first step is to create an HTTP-triggered Azure Function:

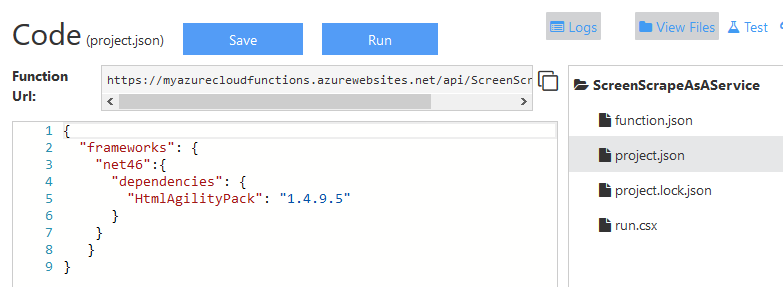

To help with the parsing, we can use the HTML Agility Pack NuGet package. To add this to the function, create a project.json file and add the NuGet reference:

```json
{
  "frameworks": {
    "net46": {
      "dependencies": {
        "HtmlAgilityPack": "1.4.9.5"
      }
    }
  }
}
```

Once this file is saved, the NuGet package will be installed and a `using HtmlAgilityPack;` directive can be added to the function code.

Now we can download the required HTML page as a string (in the example below, the archive page from Don’t Code Tired), use some LINQ to extract the post titles, and return them as the HTTP response. Correctly selecting and parsing the required data from the HTML is likely to be the most time-consuming part of creating the function.

The full function source code is as follows. Note that error-checking code has been omitted to keep the demo simple. Screen scraping should usually be a last resort: by its very nature it can break if the UI changes or the HTML contains unexpected data. It also requires the whole HTML page to be downloaded, which may raise performance concerns.

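```csharp
using System.Net;
using HtmlAgilityPack;

// Reuse one HttpClient across invocations; creating a new instance per call
// can exhaust available sockets under load.
private static HttpClient client = new HttpClient();

public static async Task<HttpResponseMessage> Run(HttpRequestMessage req, TraceWriter log)
{
    // Download the archive page as a string
    string html = await client.GetStringAsync("http://dontcodetired.com/blog/archive");

    // Parse the HTML into a queryable document
    HtmlDocument doc = new HtmlDocument();
    doc.LoadHtml(html);

    // Select the text of every <td> whose class attribute contains "title"
    var postTitles = doc.DocumentNode
        .Descendants("td")
        .Where(x => x.Attributes.Contains("class") && x.Attributes["class"].Value.Contains("title"))
        .Select(x => x.InnerText);

    // Return the titles in the response body (serialized via content negotiation, e.g. as JSON)
    return req.CreateResponse(HttpStatusCode.OK, postTitles);
}
```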
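The `Contains("title")` check in the query above matches any class value that merely includes the text "title", so a cell with a class such as `subtitle` would also be picked up. If that matters for the page being scraped, a stricter check can compare whole class tokens instead. The following is a minimal sketch, assuming class names are separated by HTML whitespace and compared case-sensitively; `HasClass` is a hypothetical helper, not part of HTML Agility Pack.

```csharp
using System;
using System.Linq;

// Hypothetical helper (not part of HTML Agility Pack): returns true when
// className appears as a whole token in a space-separated class attribute,
// so "post title" matches "title" but "subtitle" does not.
static bool HasClass(string classAttributeValue, string className)
{
    if (string.IsNullOrEmpty(classAttributeValue))
    {
        return false;
    }

    // HTML separates class names with ASCII whitespace; the ordinal
    // (case-sensitive) comparison is an assumption about the target page.
    return classAttributeValue
        .Split(new[] { ' ', '\t', '\n', '\r', '\f' }, StringSplitOptions.RemoveEmptyEntries)
        .Contains(className, StringComparer.Ordinal);
}
```

Inside the function, the `Where` clause could then become `.Where(x => HasClass(x.GetAttributeValue("class", ""), "title"))`, using HTML Agility Pack’s `GetAttributeValue` to fall back to an empty string when the attribute is missing.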

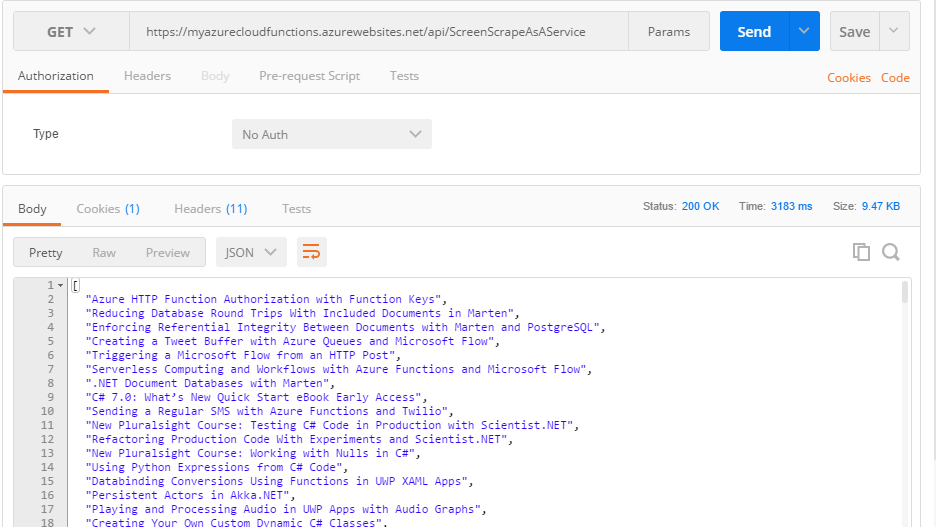

We can now call the function via HTTP and get back a list of all Don’t Code Tired article titles, as the Postman screenshot above shows.
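To consume the function from .NET code rather than Postman, a client might look something like the sketch below. It assumes .NET Core 3.0 or later (for `System.Text.Json`) and that the function responds with a JSON array of strings; `ParseTitles` and `FetchTitlesAsync` are hypothetical helper names.

```csharp
using System.Collections.Generic;
using System.Net.Http;
using System.Text.Json;
using System.Threading.Tasks;

// Hypothetical helper: converts the function's JSON array response
// (e.g. ["Post one","Post two"]) into a list of titles.
static List<string> ParseTitles(string json) =>
    JsonSerializer.Deserialize<List<string>>(json) ?? new List<string>();

// Hypothetical helper: calls the function's HTTP endpoint and parses the body.
// GetStringAsync throws HttpRequestException for non-success status codes.
static async Task<List<string>> FetchTitlesAsync(HttpClient client, string functionUrl)
{
    string json = await client.GetStringAsync(functionUrl);
    return ParseTitles(json);
}
```

For example, `await FetchTitlesAsync(httpClient, "https://<function-app>.azurewebsites.net/api/<function-name>")`, where the URL is a placeholder for the function’s own URL (plus its `code` key if the function requires one).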

To jump-start your Azure Functions knowledge, check out my Azure Function Triggers Quick Start Pluralsight course.

You can start watching with a Pluralsight free trial.
