Cyclomatic complexity is one way to measure how complicated the structure of code is and, by extension, how likely the code is to attract bugs or incur extra cost in maintenance and readability.

The calculated value for the cyclomatic complexity indicates how many linearly independent paths there are through the code, so lower numbers are better than higher numbers.
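For reference, the value is usually defined on the control-flow graph of a method. What follows is the standard McCabe formula rather than anything specific to Visual Studio, and the symbols (M for the complexity, E for edges, N for nodes, P for connected components, D for binary decision points) are only the conventional names:

```latex
\begin{align*}
M &= E - N + 2P \\
  &= D + 1 \quad \text{(a single method with one entry and one exit point, so } P = 1\text{)}
\end{align*}
```

In practice the second form is the handy one: a method with no branching has D = 0 and a complexity of 1, and a method containing a single if statement has D = 1 and a complexity of 2. Tools can differ in exactly which constructs they count as a decision point.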

Clean code is likely to have lower cyclomatic complexity than dirty code. High cyclomatic complexity increases the risk of defects because the code is harder to test, read, and maintain.

Calculating Cyclomatic Complexity in Visual Studio

To calculate the cyclomatic complexity, go to the Analyze menu and choose Calculate Code Metrics for Solution (or for a specific project within the solution).

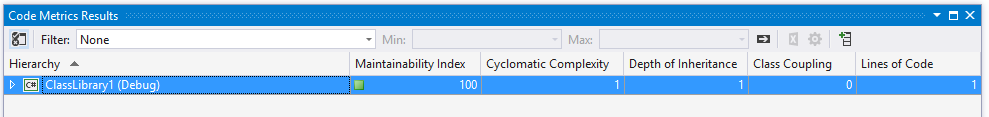

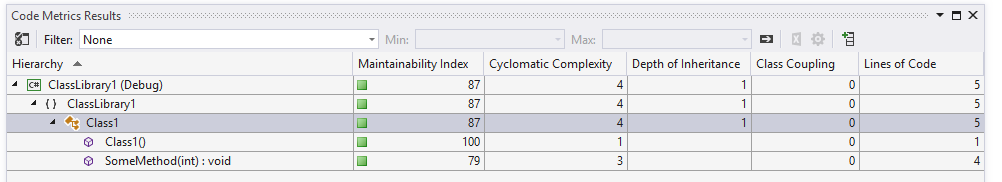

This will open the Code Metrics Results window as seen in the following screenshot.

Take the following code (in a project called ClassLibrary1):

    namespace ClassLibrary1
    {
        public class Class1
        {
        }
    }

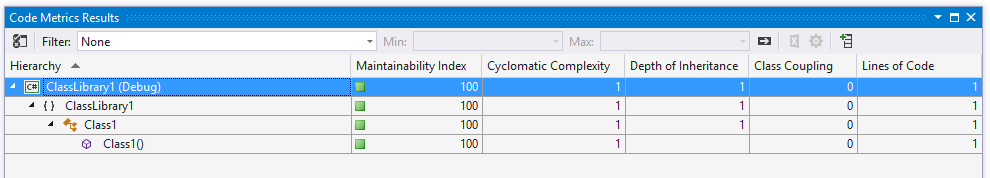

If we expand the results in the Code Metrics Window we can drill down into classes and right down to individual methods as in the following screenshot.

At present the cyclomatic complexity is 1. Let’s add a method to the class:

    namespace ClassLibrary1
    {
        public class Class1
        {
            public void SomeMethod(int someInt)
            {
            }
        }
    }

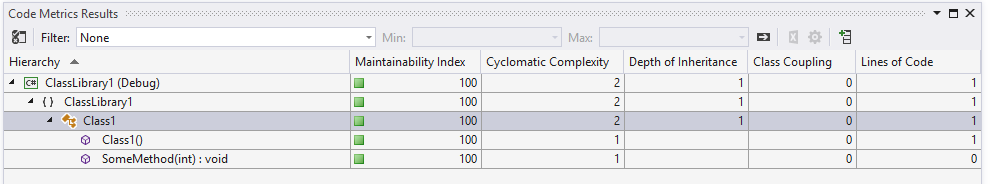

This increases the cyclomatic complexity for the project and class to 2 as in the following screenshot.

If we add an if statement inside this new method:

    namespace ClassLibrary1
    {
        public class Class1
        {
            public void SomeMethod(int someInt)
            {
                if (someInt < 10)
                {
                    //
                }
                else
                {
                    //
                }
            }
        }
    }

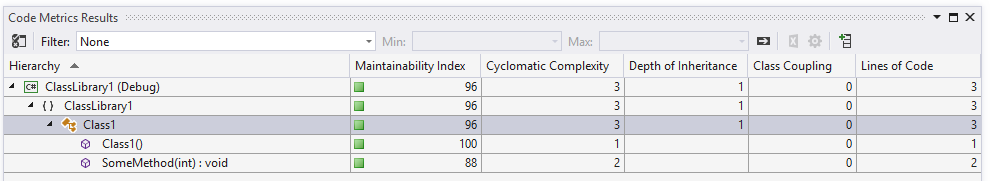

The complexity increases to 3 overall and SomeMethod now has a value of 2 – we have 2 different code paths through the method: one path if someInt is less than 10 and a second code path if the value is 10 or greater.

Let’s add a 3rd path:

    namespace ClassLibrary1
    {
        public class Class1
        {
            public void SomeMethod(int someInt)
            {
                if (someInt < 10)
                {
                    //
                }
                else if (someInt > 20)
                {
                    //
                }
                else
                {
                    //
                }
            }
        }
    }

Now the complexity for SomeMethod increases to 3 and the overall complexity increases to 4 as in the following screenshot.
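A complexity of 3 also hints at the minimum number of inputs needed to exercise every branch. The sketch below uses a hypothetical variant of SomeMethod that returns a label for the branch it took; the class name PathDemo, the method name DescribePath and the returned strings are invented for illustration and are not part of ClassLibrary1:

```csharp
public static class PathDemo
{
    // Hypothetical variant of SomeMethod: same three branches,
    // but each one returns a label so the path taken is visible.
    public static string DescribePath(int someInt)
    {
        if (someInt < 10)
        {
            return "less than 10";      // path 1
        }
        else if (someInt > 20)
        {
            return "greater than 20";   // path 2
        }
        else
        {
            return "10 to 20";          // path 3
        }
    }
}
```

Calling DescribePath with 5, 25 and 15 hits each of the three paths once, so three test cases are enough to cover every branch here.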

If we create a really complex method now with multiple nested if statements:

    public bool SomeComplexMethod(int age, string name, bool isAdmin)
    {
        bool result = false;
        if (name == "Sarah")
        {
            if (age < 20 || age > 100)
            {
                if (isAdmin)
                {
                    result = true;
                }
                else if (age == 42)
                {
                    result = false;
                }
                else
                {
                    result = true;
                }
            }
        }
        else if (name == "Gentry" && isAdmin)
        {
            result = false;
        }
        else
        {
            if (age == 50)
            {
                if (isAdmin)
                {
                    if (name == "Amrit")
                    {
                        result = false;
                    }
                    else if (name == "Jane")
                    {
                        result = true;
                    }
                    else
                    {
                        result = false;
                    }
                }
            }
        }
        return result;
    }

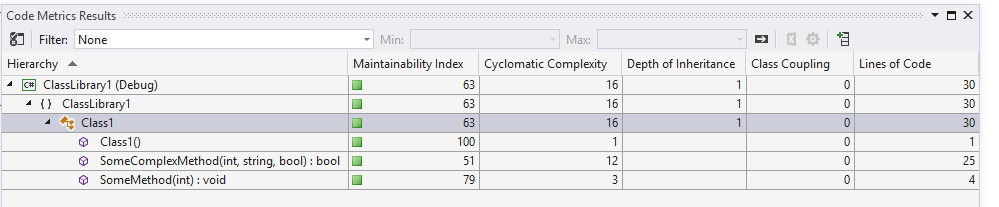

The (unfathomable) SomeComplexMethod gets a complexity of 12, and the overall complexity increases to 16, as in the following screenshot.
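The 12 can be reproduced by hand. The tally below assumes that each if or else if counts as one decision point and that each short-circuit operator (|| or &&) counts as one more; that assumption is consistent with the reported value, but it is a hand count rather than a description of how the tool works internally. D is simply shorthand for the number of decision points:

```latex
\begin{align*}
D &= 9 \;\text{(\texttt{if} and \texttt{else if} conditions)} + 2 \;\text{(one \texttt{||} and one \texttt{\&\&})} = 11 \\
\text{complexity} &= D + 1 = 12
\end{align*}
```

The same arithmetic explains the overall figure: 12 for SomeComplexMethod, 3 for SomeMethod, and the 1 the empty class already had give 16.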

Good Values

Whilst there is no single maximum “this value is always bad” limit, cyclomatic complexity exceeding 10-15 is usually a bad sign. We can see that SomeComplexMethod is impossible to reason about without expending great mental effort and this “only” has a complexity of 12.
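Much of that mental effort goes into working out which branches actually matter. As a rough sketch, the method below returns the same result as SomeComplexMethod for every input; the class name AccessRules, the method name SomeSimplerMethod and the local variable names are hypothetical, and the method is static only to keep the example self-contained:

```csharp
public static class AccessRules
{
    // Hypothetical rewrite of SomeComplexMethod: same parameters, same results.
    public static bool SomeSimplerMethod(int age, string name, bool isAdmin)
    {
        // In the original, the "age == 42" branch can never run because it sits
        // inside a block that already requires age < 20 or age > 100, so every
        // "Sarah" outside the 20 to 100 range ends up with true.
        bool sarahOutsideAgeRange = name == "Sarah" && (age < 20 || age > 100);

        // In the original's final else block, only an admin named "Jane"
        // aged exactly 50 ends up with true.
        bool janeAdminAgedFifty = name == "Jane" && isAdmin && age == 50;

        // Every other combination leaves the result at its initial false.
        return sarahOutsideAgeRange || janeAdminAgedFifty;
    }
}
```

Counting decision points by hand (five short-circuit operators, plus one) gives 6 rather than 12, although the figure Visual Studio reports for a rewrite like this may differ.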

Notice that the Code Metrics Results window also gives an overall Maintainability Index, which ranges from 0 to 100. Higher values indicate code that is easier to maintain: 20-100 = “good maintainability”; 10-19 = “moderate maintainability”; 0-9 = “low maintainability” [MSDN].

SomeComplexMethod has a Maintainability Index of 51, which equates to “good maintainability”, though I would argue against this description.

This point illustrates that while metrics are useful, they shouldn’t be relied upon alone to judge the cleanliness of code. Metrics tracked as a trend over time can give important insights. For example, if six months ago a project had a cyclomatic complexity of 10 and now it has a value of 50, further investigation is probably warranted.

Clean Code is a Combination of Factors

To sum up: clean, readable, maintainable code is a combination of factors including the use of things such as good names. The following code shows SomeComplexMethod with cryptic parameters and variable names:

    public bool SomeComplexMethod(int a, string b, bool c)
    {
        bool r = false;
        if (b == "Sarah")
        {
            if (a < 20 || a > 100)
            {
                if (c)
                {
                    r = true;
                }
                else if (a == 42)
                {
                    r = false;
                }
                else
                {
                    r = true;
                }
            }
        }
        else if (b == "Gentry" && c)
        {
            r = false;
        }
        else
        {
            if (a == 50)
            {
                if (c)
                {
                    if (b == "Amrit")
                    {
                        r = false;
                    }
                    else if (b == "Jane")
                    {
                        r = true;
                    }
                    else
                    {
                        r = false;
                    }
                }
            }
        }
        return r;
    }

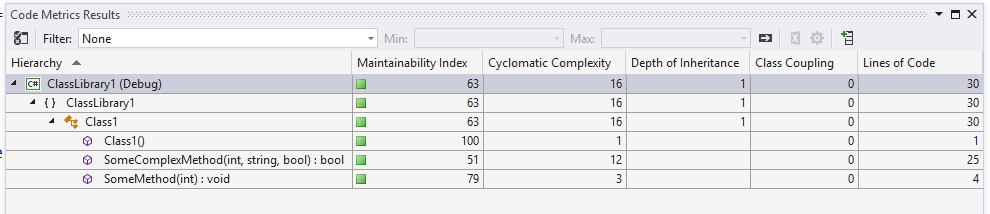

These terrible names don’t degrade the metrics: we still get a Maintainability Index of 51 and a cyclomatic complexity of 12, even though the method is now even less maintainable.

